Managing the Data of 1.65 Billion Users

With over 1.65 billion users, Facebook has to manage what most tech companies would regard as an overwhelming amount of data. The company isn't shy about showing off the impressive facilities that house its servers, offering glimpses into its mammoth data centers at regular intervals.

Thanks to Facebook founder and CEO Mark Zuckerberg, we now have our first detailed look at the company's most unusual data center.

The scale of Facebook's data challenge:

- 1.65 billion users: Massive global user base to serve

- Overwhelming data volumes: Beyond what most tech companies handle

- Multiple data centers: Global infrastructure to support platform

- Constant growth: Users and data increasing daily

- Complex infrastructure: Technology that keeps the world connected

- Transparency approach: Facebook regularly shares facility details

Location: 70 Miles from the Arctic Circle

The Lulea data center is tucked deep within the forests of northern Sweden, just 70 miles south of the Arctic Circle.

Mark Zuckerberg shared a series of annotated photos of the building, its interior, and its staff on his official Facebook page, which boasts over 81 million followers.

Strategic location details:

- Location: Lulea, northern Sweden

- Proximity to Arctic Circle: Just 70 miles south

- Setting: Deep within Swedish forests

- Climate advantage: Cold temperatures for most of the year

- Remote placement: Away from populated areas

- Mark Zuckerberg's reveal: Shared on his page with 81 million followers

Massive Scale: Six Football Fields

Facebook started work on the project in 2011, and the completed building covers an area roughly the size of six football fields.

Construction timeline and scale:

- Project start: 2011

- Size: Six football fields

- Status: Completed and operational

- Investment: Massive multi-year project

- Infrastructure: Purpose-built for Facebook's needs

- Expansion potential: Room for future growth

Natural Cooling System

The local temperature, which drops below 50°F (10°C) on most days, actually works in the site's favor.

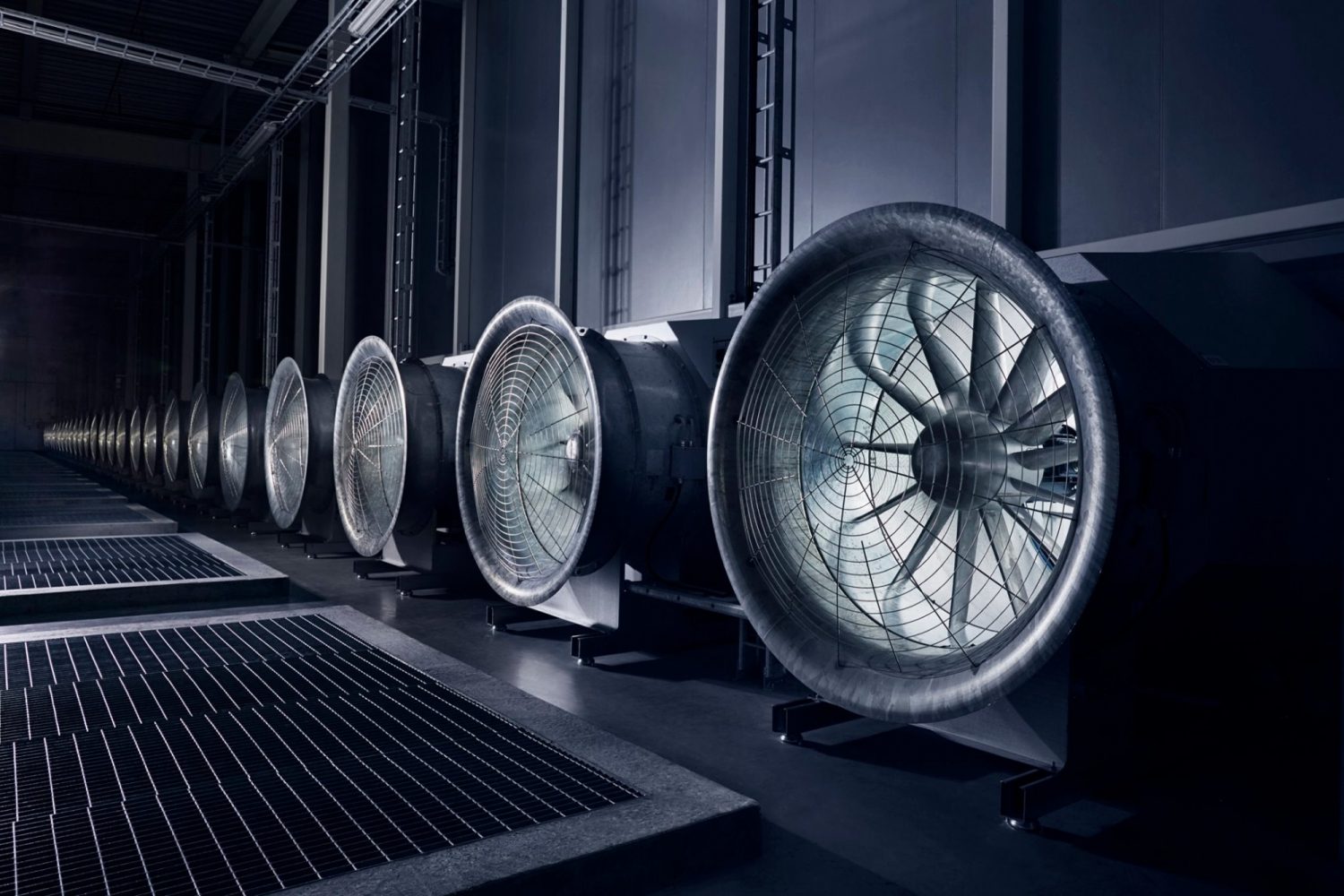

According to Zuckerberg, large fans pull the outside air into the building to help "naturally cool" the thousands of warm servers lining the center's massive hallways.

How the cooling system works:

- Cold climate: Below 50 degrees Fahrenheit (10°C) most days

- Large fans: Pull frigid outside air into facility

- Natural cooling: Far less reliance on energy-intensive air conditioning

- Free cooling: Climate provides the refrigeration

- Thousands of servers: All generating heat constantly

- Massive hallways: Air circulation throughout facility

- Cost savings: Dramatic reduction in cooling energy
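To make the idea of "free cooling" concrete, here is a minimal sketch of how cold outside air can be blended with the warm exhaust air coming off the servers to hit a target supply temperature. It assumes ideal mixing of two air streams and ignores humidity; the function names and the temperature setpoints are hypothetical illustrations, not Facebook's actual control logic.

```python
# A minimal sketch of outside-air ("free") cooling, assuming ideal mixing of
# two air streams and ignoring humidity. Names and setpoints are hypothetical.

def outside_air_fraction(t_outside: float, t_return: float, t_supply_target: float) -> float:
    """Fraction of outside air to blend with warm server exhaust (return air)
    so the mixed supply air reaches the target temperature.

    Ideal mixing:  t_supply = f * t_outside + (1 - f) * t_return
    Solving for f: f = (t_return - t_supply) / (t_return - t_outside)
    """
    if t_outside >= t_return:
        # Outside air is no cooler than the exhaust, so it cannot help.
        return 0.0
    f = (t_return - t_supply_target) / (t_return - t_outside)
    # Clamp to [0, 1]: above 1 means even 100% outside air is too warm,
    # so some mechanical cooling would have to assist.
    return max(0.0, min(1.0, f))


def needs_mechanical_cooling(t_outside: float, t_supply_target: float) -> bool:
    """Outside air alone suffices only when it is at or below the target."""
    return t_outside > t_supply_target


if __name__ == "__main__":
    # Hypothetical setpoints in degrees Celsius.
    t_return = 35.0   # warm air leaving the server racks
    t_target = 25.0   # desired supply-air temperature
    for t_out in (-20.0, 5.0, 10.0, 30.0):
        f = outside_air_fraction(t_out, t_return, t_target)
        print(f"outside {t_out:5.1f} C -> {f:.0%} outside air, "
              f"mechanical assist: {needs_mechanical_cooling(t_out, t_target)}")
```

The colder the outside air, the smaller the share needed to cool the servers, which is why a site that sits below 10°C on most days rarely has to fall back on energy-hungry chillers.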

Renewable Energy from Hydro-Electric Plants

The location also provides a renewable energy source, courtesy of the hydro-electric plants that operate on nearby rivers in the picturesque region.

"The whole system is 10 percent more efficient and uses almost 40 percent less power than traditional data centers," claims Zuckerberg.

Energy efficiency achievements:

- Renewable energy: Powered by nearby hydro-electric plants

- River-based power: Sustainable water-powered electricity

- 10% more efficient: Overall system improvement

- 40% less power: Compared to traditional data centers

- Environmental benefit: Clean, renewable energy source

- Cost reduction: Lower ongoing operational expenses

- Picturesque setting: Beautiful northern Swedish rivers

Open Compute Project Technology

The technology housed inside the facility is based on innovative, open-source designs from the Open Compute Project (an initiative in which tech giants openly share server and data center hardware designs in order to speed up innovation).

What is the Open Compute Project:

- Tech giant partnership: Major companies sharing designs

- Open-source approach: Freely available infrastructure designs

- Accelerated growth: Shared knowledge speeds innovation

- Industry collaboration: Competitors working together

- Hardware designs: Servers and infrastructure blueprints

- Efficiency focus: Optimizing performance and power use

Technology implemented at Lulea:

- Server designs: Custom, open-source server hardware

- Power distribution: Innovative electrical systems

- Reduced to basics: Minimal components to prevent overheating

- Quick access: Easy to reach and repair

- Modular design: Standardized, replaceable components

- Heat management: Designed to stay cool

Rapid Repair Times: From 1 Hour to 2 Minutes

"A few years ago, it took an hour to repair a server hard drive. At Lulea, that's down to two minutes," writes Zuckerberg.

Maintenance efficiency:

- Traditional repair time: 1 hour for hard drive replacement

- Lulea repair time: 2 minutes for same task

- 30x faster: Dramatic improvement in efficiency

- Minimal downtime: Quick repairs mean better uptime

- Design achievement: Smart infrastructure enables speed

- Staff efficiency: Technicians can service more equipment
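The "30x faster" figure follows directly from Zuckerberg's numbers (60 minutes down to 2). The short sketch below checks that arithmetic and shows what it could mean for one technician's throughput; the 8-hour shift is a hypothetical assumption, and the per-shift counts are upper bounds that ignore diagnosis, walking (or scooting) between racks, and breaks.

```python
# Back-of-the-envelope check of the repair-time figures quoted by Zuckerberg.
# The 8-hour shift length is a hypothetical assumption for illustration.

OLD_REPAIR_MIN = 60   # "it took an hour to repair a server hard drive"
NEW_REPAIR_MIN = 2    # "At Lulea, that's down to two minutes"


def speedup(old_minutes: float, new_minutes: float) -> float:
    """How many times faster the new repair process is."""
    return old_minutes / new_minutes


def repairs_per_shift(repair_minutes: float, shift_hours: float = 8.0) -> int:
    """Upper bound on back-to-back repairs one technician could finish in a
    shift, ignoring travel between racks, diagnosis, and breaks."""
    return int(shift_hours * 60 // repair_minutes)


if __name__ == "__main__":
    print(f"speedup: {speedup(OLD_REPAIR_MIN, NEW_REPAIR_MIN):.0f}x")          # speedup: 30x
    print("repairs per 8-hour shift, old:", repairs_per_shift(OLD_REPAIR_MIN))  # 8
    print("repairs per 8-hour shift, new:", repairs_per_shift(NEW_REPAIR_MIN))  # 240
```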

Privacy Protection: Hard Drive Destruction

To protect its users' privacy, Facebook crushes obsolete hard drives, a critical task assigned to a dedicated staff member.

Data security measures:

- Physical destruction: Obsolete drives are crushed

- Dedicated staff: Critical task assigned to team members

- Privacy protection: Ensuring user data can't be recovered

- Permanent disposal: Complete drive destruction

- Security protocol: Systematic approach to old hardware

- User trust: Demonstrating commitment to privacy

Life Inside the Data Center

Life inside the daunting data center does have its perks. Aside from the stunning views, and state-of-the-art tech, employees are also provided with scooters to maneuver around the main data hall — again illustrating just how huge the Lulea facility is.

Employee experience:

- Stunning views: Beautiful northern Swedish scenery

- State-of-the-art tech: Working with cutting-edge equipment

- Scooters provided: To navigate the massive facility

- Scale illustration: Need vehicles to get around inside!

- 150 staff members: Total workforce

- Modern workplace: Unique work environment

Frequently Empty Halls

Overall, 150 staff members work inside the building, but Zuckerberg describes the halls as "frequently empty" because the efficient servers need so little maintenance.

Why the halls are empty:

- Highly efficient servers: Require minimal maintenance

- Reliable technology: Systems run without constant intervention

- Automated systems: Much of the operation is self-managing

- 150 staff for massive facility: Relatively small workforce needed

- Design success: Technology that doesn't need babysitting

- Empty hallways: Eerie but efficient

Aesthetic: Sci-Fi Film Setting

The images depict a facility that matches its sparse surroundings with its steely interiors. Combined with the lack of a human presence, the photos seem like they're from some long-forgotten expressionist sci-fi film.

Visual character:

- Steely interiors: Industrial, minimalist design

- Sparse surroundings: Matching the remote Swedish forests

- Minimal human presence: Empty, echoing hallways

- Sci-fi aesthetic: Like a futuristic movie set

- Expressionist quality: Stark, dramatic visuals

- Long-forgotten feel: Timeless, otherworldly atmosphere

The Invisible Infrastructure

"You probably don't think about Lulea when you share with friends on Facebook, but it's an example of the incredibly complex technology infrastructure that keeps the world connected," writes Zuckerberg.

The hidden reality of social media:

- Invisible to users: People don't think about data centers when posting

- Complex infrastructure: Massive technology supporting simple actions

- Keeping world connected: Infrastructure enabling global communication

- Arctic servers: Supporting your vacation photos

- Massive scale required: Six football fields to support 1.65 billion users

- Seamless experience: Users never see the machinery behind it

Key Innovations of the Lulea Data Center

Summary of groundbreaking features:

- Natural cooling: Using the cold near-Arctic climate in place of conventional air conditioning

- Renewable energy: Hydro-electric power from nearby rivers

- 40% less power: Compared to traditional data centers

- 10% more efficient: Overall system improvement

- 2-minute repairs: Down from 1 hour traditionally

- Open Compute Project: Open-source technology collaboration

- Six football fields: Massive scale facility

- 150 staff: Running entire operation

- Frequently empty: Efficient systems need little maintenance

The Bottom Line

Facebook's Lulea data center represents a remarkable achievement in sustainable, efficient data infrastructure. By choosing a location 70 miles from the Arctic Circle, the company turned extreme cold from a challenge into an advantage.

The facility demonstrates that:

- Smart location choices can dramatically reduce energy costs

- Natural cooling beats air conditioning

- Renewable energy can power massive operations

- Open-source collaboration accelerates innovation

- Design efficiency reduces maintenance needs

- Privacy protection requires physical security measures

Most importantly, it reminds us that every casual Facebook post, every photo shared, and every message sent is supported by incredibly complex infrastructure. While users see a simple app on their phones, behind the scenes are facilities the size of six football fields in the forests near the Arctic Circle, keeping 1.65 billion people connected worldwide.

Next time you share on Facebook, remember: somewhere in northern Sweden, servers in a sci-fi-like facility are processing your data, cooled by Arctic air and powered by renewable hydro-electricity — all so you can tag your friend in a meme.